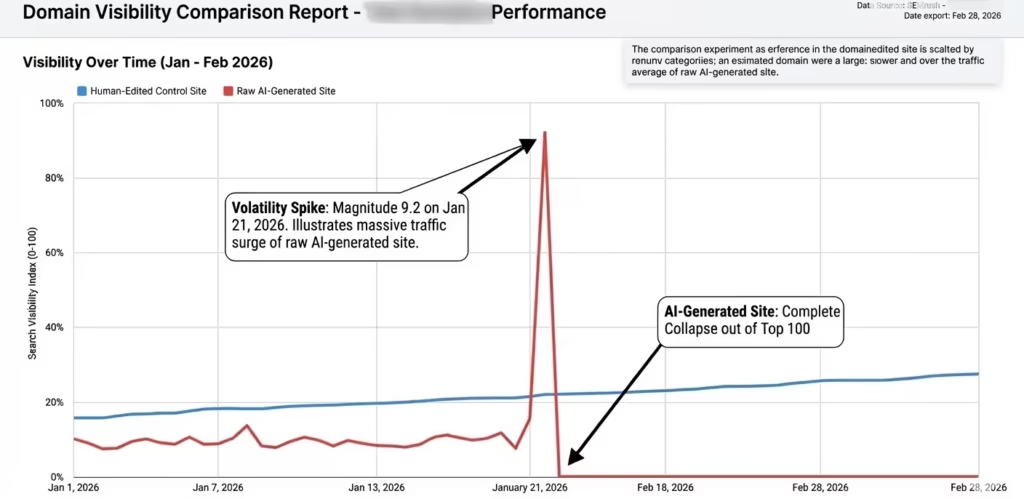

The first quarter of 2026 brought unprecedented search ranking turnover, with near-constant movement in the search results. For businesses that relied on high-velocity content, the resulting “Mt. AI” crash was devastating. We at Kha are publishing this case study in the spirit of “Content Necessity,” a newly formalized quality standard for judging whether an article provides unique value. This is not a theoretical overview. It is a step-by-step diagnostic breakdown of how our team recovered search visibility for one of our clients by moving from automated publishing to verifiable, human-led practitioner expertise.

Recovery in the MUVERA Algorithm Era

The January 2026 Mt. AI crash decimated websites that relied on mass-produced, low-effort AI content. Recovery requires replacing that model with verifiable first-hand experience, original data, and strict human editorial oversight.

This guide details the exact multi-modal and entity-based strategies we used to regain organic visibility, build trust, and secure citations in Gemini-powered AI Overviews.

Critical Recovery Pillars

- Verifiable Experience
- Original Data Sets
- Human Editorial Oversight
- Multi-Modal Distribution

How Did the 2026 Mt. AI Crash Alter the Search Landscape?

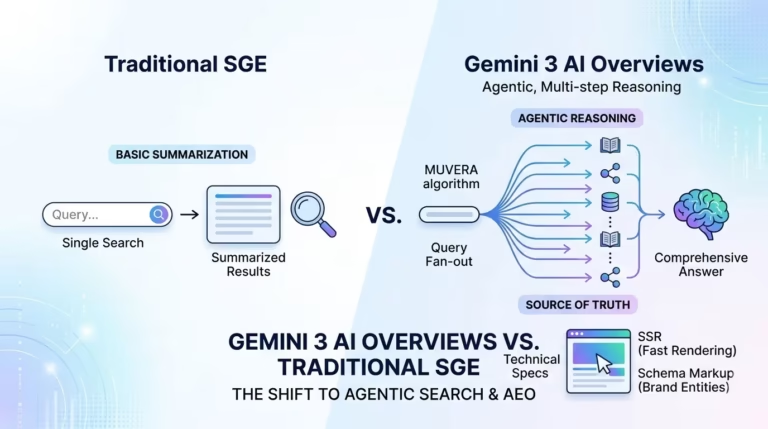

To understand the crash, we have to look at the structural recalibrations that preceded it. By making multi-vector retrieval nearly as fast as traditional single-vector search, the MUVERA algorithm enabled Google to better recognize search intent and context across diverse data types. This shift toward multi-vector retrieval rewards content depth and semantic richness rather than keyword frequency. As a result, high-velocity publishing of AI content is now treated as a “spam” signal unless it is paired with human expert oversight.
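For readers curious about the retrieval mechanics: the core idea in Google Research's published MUVERA work is to collapse a set of vectors into one fixed dimensional encoding (FDE) whose dot product approximates the multi-vector Chamfer similarity, so an ordinary single-vector index can serve multi-vector search. The Python below is a minimal sketch under simplifying assumptions: a single SimHash partitioning, no repetitions or projections, no empty-bucket filling, and toy hand-written vectors. The function names are hypothetical, and this is not Google's production implementation.

```python
import random

def dot(a, b):
    """Plain dot product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))

def chamfer(query_vecs, doc_vecs):
    """Multi-vector score: each query vector takes its best-matching doc vector."""
    return sum(max(dot(q, d) for d in doc_vecs) for q in query_vecs)

def random_hyperplanes(num_planes, dim, seed=0):
    """Gaussian hyperplanes for SimHash partitioning (seeded for repeatability)."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(num_planes)]

def bucket_of(vec, planes):
    """SimHash: the sign pattern against k hyperplanes selects one of 2**k buckets."""
    idx = 0
    for p in planes:
        idx = (idx << 1) | (1 if dot(vec, p) > 0 else 0)
    return idx

def fixed_dim_encoding(vecs, planes, is_query):
    """Collapse a set of vectors into one vector of length 2**k * dim.

    Queries sum the vectors in each bucket; documents average them.
    Empty buckets stay zero (the full method's empty-bucket handling is omitted).
    """
    dim = len(vecs[0])
    num_buckets = 2 ** len(planes)
    sums = [[0.0] * dim for _ in range(num_buckets)]
    counts = [0] * num_buckets
    for v in vecs:
        b = bucket_of(v, planes)
        counts[b] += 1
        for i, x in enumerate(v):
            sums[b][i] += x
    encoding = []
    for b in range(num_buckets):
        if is_query or counts[b] == 0:
            encoding.extend(sums[b])
        else:
            encoding.extend(x / counts[b] for x in sums[b])
    return encoding

if __name__ == "__main__":
    query = [[1.0, 0.0], [0.0, 1.0]]
    doc = [[2.0, 0.0], [0.0, 3.0]]
    # Hand-picked plane (sign of x - y) that puts each query vector in the
    # same bucket as its best match, so the approximation is exact here.
    planes = [[1.0, -1.0]]
    print(chamfer(query, doc))  # 5.0
    print(dot(fixed_dim_encoding(query, planes, True),
              fixed_dim_encoding(doc, planes, False)))  # 5.0
```

With hyperplanes drawn by `random_hyperplanes`, vectors will not always land in the same bucket as their best match, so the FDE score is only an approximation of the Chamfer score; the payoff is that it can be computed with a single dot product.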

The “Experience” Multiplier


Rater Guidelines now demand proof of engagement. Google prioritizes content that provides an “I was there” perspective through verifiable human experience.

Algorithm Warning

Purely AI-generated content without human editing is now considered “Lowest Quality” and faces systemic devaluation in 2026.

How Do I Satisfy the Enhanced E-E-A-T “Experience” Multiplier?

To satisfy the “Experience” multiplier introduced in the September 2025 Search Quality Rater Guidelines, content must prove real-world involvement, emphasizing an “I was there” perspective.

Overcoming the crash meant shifting from a “publisher” mindset to a “practitioner” mindset. The algorithms now prioritize detailed descriptions of real-world challenges to satisfy the Experience requirement of E-E-A-T. To execute this, we stripped our client’s site of generic summaries and integrated personal case studies and quotes that an AI model cannot replicate.

Furthermore, we recognized the impact of the February 2026 Discover Core Update, which heavily favored content from websites based in the user’s specific country. Operating out of San Jose, California, we emphasized local landmarks and neighborhood-specific testimonials to align with this local prioritization shift.

How Do I Optimize a Site for Gemini 3 and AI Overviews?

Optimizing for Gemini 3 requires a multi-modal AEO (Answer Engine Optimization) strategy that structures content around natural-language questions rather than fragmented keywords. This conversational structure positions individual passages to answer the sub-queries produced by “query fan-out,” a technique in which the AI issues hundreds of parallel queries and synthesizes the results into a comprehensive answer.
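To make the fan-out idea concrete, here is a toy Python sketch, not Gemini's actual pipeline. A hypothetical list of sub-queries is issued in parallel against a few passages keyed by question-style H2 headers, and each sub-query keeps the passage with the largest word overlap. The passages, sub-queries, and word-overlap scoring are all illustrative assumptions; production systems use learned retrieval rather than word counts.

```python
import re
from concurrent.futures import ThreadPoolExecutor

# Hypothetical page passages keyed by their conversational H2 headers.
PASSAGES = {
    "How did the 2026 crash alter search?":
        "Multi-vector retrieval rewards depth over keyword frequency.",
    "How do I satisfy the Experience multiplier?":
        "Show first-hand involvement with case studies and quotes.",
    "How do I optimize for AI Overviews?":
        "Use direct answer blocks under question headers.",
}

def tokens(text):
    """Lowercased alphanumeric words as a set."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def best_passage(sub_query):
    """Return (sub_query, best header, overlap score) for one sub-query."""
    q = tokens(sub_query)

    def score(header):
        return len(q & (tokens(header) | tokens(PASSAGES[header])))

    header = max(PASSAGES, key=score)
    return sub_query, header, score(header)

def fan_out(sub_queries):
    """Issue all sub-queries concurrently; results come back in input order."""
    with ThreadPoolExecutor(max_workers=8) as pool:
        return list(pool.map(best_passage, sub_queries))

if __name__ == "__main__":
    for sq, header, s in fan_out(["what is the experience multiplier",
                                  "how to optimize for ai overviews"]):
        print(f"{sq!r} -> {header!r} (overlap {s})")
```

The point of the toy is the shape, not the scoring: when each header is phrased as the question a sub-query might ask, a single passage can be matched and lifted on its own.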

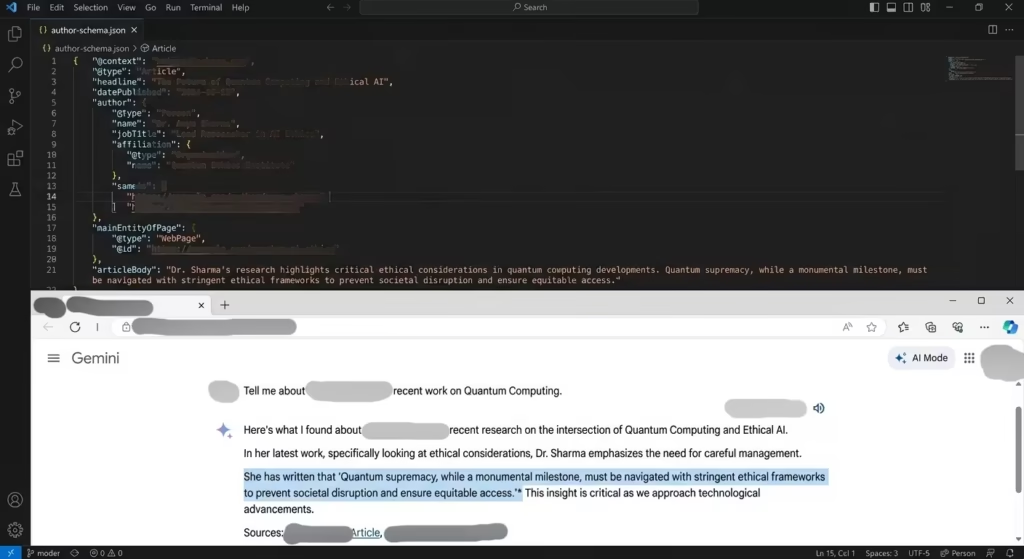

During our recovery, we realized that success is no longer solely about driving traffic to a landing page; it is about “Brand Discovery” and “Entity Representation” across a fragmented landscape of AI agents.

To optimize our architecture, we implemented the following strategic adjustments:

Strategic Implementation & Performance

To thrive in the 2026 search environment, technical compliance must meet practitioner-level execution. Below is the blueprint of our finalized strategy.

| Strategy | Algorithmic Goal | Implementation Result |
|---|---|---|
| Entity Representation | Build a “content knowledge graph” to help AI interpret brand relationships. | Schema Integration: Implemented Organization and Person schema markup as a baseline. |
| Competitor Transparency | Survive the “Death of the Self-Ranking Listicle” and build trust. | Trust Building: Acknowledged competitors and used original pricing analysis to demonstrate expertise rather than publishing biased sales pitches. |
| Technical Formatting | Structure content with “passage-level clarity” for easy extraction. | Semantic Architecture: Used direct answer blocks and conversational H2 headers. |
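One way to audit the “Technical Formatting” row is a small script that checks whether each H2 is phrased as a question and is followed by a concise direct answer. The sketch below assumes markdown input with `## ` headers and uses an arbitrary 60-word limit for the answer block. The helper name and the threshold are hypothetical, not a documented Google requirement.

```python
import re

MAX_ANSWER_WORDS = 60  # hypothetical threshold; tune to your own style guide

def audit_answer_blocks(markdown):
    """Flag H2 sections that lack a question header or a concise first paragraph."""
    issues = []
    # Split at lines starting with "## "; the text before the first H2 is dropped.
    sections = re.split(r"^## +", markdown, flags=re.MULTILINE)[1:]
    for section in sections:
        header, _, body = section.partition("\n")
        header = header.strip()
        paragraphs = [p.strip() for p in body.split("\n\n") if p.strip()]
        first = paragraphs[0] if paragraphs else ""
        if not header.endswith("?"):
            issues.append(f"Header is not a question: {header!r}")
        if not first:
            issues.append(f"No answer block under: {header!r}")
        elif len(first.split()) > MAX_ANSWER_WORDS:
            issues.append(f"Answer block too long under: {header!r}")
    return issues

if __name__ == "__main__":
    page = "# Title\n\n## What is MUVERA?\n\nA multi-vector retrieval method.\n\n## Pricing\n\nSee below.\n"
    for issue in audit_answer_blocks(page):
        print(issue)
```

Running a check like this on every draft keeps the “direct answer first” pattern from eroding as pages are edited over time.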

It is fascinating to see how quickly search engines respond when you provide them with a clear “map” of your data. By adding an Author Entity via structured data, you are essentially moving from a language the AI has to guess at to a language it speaks fluently.
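As a minimal sketch of the baseline markup described in the table, the Python below emits schema.org JSON-LD that declares an Organization, a Person who `worksFor` it, and an Article whose `author` and `publisher` point to those entities by `@id`. The domain, names, job title, and the `#org`/`#author` identifiers are placeholders, not the client's real data, and the output should be validated with a structured-data testing tool before deployment.

```python
import json

SITE = "https://www.example.com"  # placeholder domain (assumption)

def build_entity_graph(org_name, author_name, job_title, headline, same_as=()):
    """Build a schema.org @graph linking Article -> Person -> Organization by @id."""
    org_id = f"{SITE}/#org"        # hypothetical fragment identifiers
    author_id = f"{SITE}/#author"
    graph = [
        {"@type": "Organization", "@id": org_id, "name": org_name, "url": SITE},
        {
            "@type": "Person",
            "@id": author_id,
            "name": author_name,
            "jobTitle": job_title,
            "worksFor": {"@id": org_id},
            "sameAs": list(same_as),  # e.g. profile URLs that confirm identity
        },
        {
            "@type": "Article",
            "headline": headline,
            "author": {"@id": author_id},
            "publisher": {"@id": org_id},
        },
    ]
    return {"@context": "https://schema.org", "@graph": graph}

def to_script_tag(data):
    """Wrap the JSON-LD in the script tag used to embed it in a page's HTML."""
    return ('<script type="application/ld+json">\n'
            + json.dumps(data, indent=2) + "\n</script>")

if __name__ == "__main__":
    graph = build_entity_graph(
        "Example Agency", "Jane Doe", "SEO Strategist", "Example Case Study",
        same_as=["https://www.linkedin.com/in/example"],
    )
    print(to_script_tag(graph))
```

Referencing entities by `@id` rather than repeating them inline lets every article on the site point at the same Person and Organization nodes, which is the “content knowledge graph” idea from the table in its simplest form.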

Conclusion: The Era of Autonomous Retrieval and Verifiable Trust

The persistent volatility in the early 2026 SERPs proves that the search algorithm is a continuous, real-time evaluator of utility. The devaluation of biased listicles and low-effort AI generation indicates that the era of “SEO shortcuts” has ended.

Ultimately, domains older than 15 years dominate the top 10 more than ever, suggesting that “Trust” has become the primary barrier to entry. By discarding AI slop, embracing architectural transparency, and proving lived experience through original media, brands can successfully navigate this “Great Decoupling” and become indispensable entities in Google’s evolving knowledge map.

Ethical Disclosure: The structural foundation and data synthesis of this post were assisted by Gemini, an AI language model. However, the diagnostic reasoning, methodology, and local market applications were heavily refined and overseen by our human editorial team to ensure strict adherence to Google’s “Human-in-the-loop” production standards.