MUVERA (Multi-Vector Retrieval via Fixed Dimensional Encodings) is a retrieval algorithm from Google Research that makes multi-vector search efficient by reducing it to single-vector Maximum Inner Product Search (MIPS). Each set of vectors is compressed into one fixed-length vector that standard MIPS indexes can search.

Google MUVERA: Semantic Intelligence at Scale

By cutting latency by 90% while improving recall by 10% (on average across the BEIR benchmark datasets, relative to the PLAID multi-vector retriever), MUVERA allows Google to recognize granular semantic nuance and intent across text, code, and images at scale.

Why is MUVERA a “Content Necessity” in 2026?

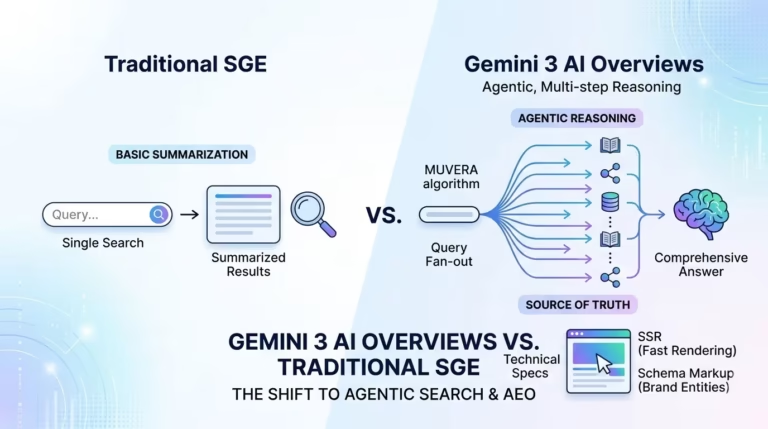

The trajectory of search between 2025 and 2026 represents a paradigm shift from traditional indexing to a unified system of neural retrieval. Earlier neural retrieval relied on single-vector embeddings, which squeeze an entire passage into one vector and often sacrifice semantic nuance for speed. MUVERA bridges this gap, allowing Google to use multi-vector models such as ColBERT without prohibitive computational costs. This post provides a practitioner’s analysis of how to optimize for this “Deep Search” era.

How does MUVERA reduce search latency while improving accuracy?

The Architectural Foundation

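MUVERA’s core innovation is the Fixed Dimensional Encoding (FDE). Randomized mappings turn each set of vectors into a single fixed-length vector, constructed so that the inner product of two FDEs approximates the true multi-vector similarity, known as Chamfer similarity: for each query vector, take its highest inner product with any document vector, then sum those maxima over all query vectors.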
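To make the target quantity concrete, here is a minimal NumPy sketch of Chamfer similarity, the measure FDEs are meant to approximate. For each query vector it takes the best inner product against the document's vectors and sums those maxima. The function name and the toy 2-D vectors are illustrative assumptions, not part of MUVERA itself.

```python
import numpy as np


def chamfer_similarity(query_vecs: np.ndarray, doc_vecs: np.ndarray) -> float:
    """Sum, over query vectors, of each one's best inner product with any doc vector."""
    # Pairwise inner products: rows are query vectors, columns are doc vectors.
    sims = query_vecs @ doc_vecs.T
    # Best match per query vector (row-wise max), then sum over the query.
    return float(sims.max(axis=1).sum())


query = np.array([[1.0, 0.0], [0.0, 1.0]])
doc = np.array([[0.8, 0.6], [0.0, 1.0], [1.0, 0.0]])

print(chamfer_similarity(query, doc))  # 2.0
print(chamfer_similarity(doc, query))  # a different value: the measure is asymmetric
```

Swapping the arguments changes the score, which is why queries and documents cannot simply be encoded the same way.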

Asymmetric Encoding

Queries and documents are encoded differently because Chamfer similarity is itself asymmetric: it measures how well each query vector is matched by some document vector, not the reverse.

Locality-Sensitive Hashing (LSH)

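The algorithm partitions the vector space into buckets using SimHash. It samples k random Gaussian vectors, and each token vector is assigned to one of 2^k buckets according to the signs of its inner products with them.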
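The SimHash partitioning step can be sketched in a few lines. This is a minimal illustration, assuming k random Gaussian vectors of the same dimension as the embeddings. Bit i of a bucket id is 1 when the vector has a positive inner product with the i-th Gaussian vector. `simhash_buckets` and the toy sizes are hypothetical choices, not MUVERA's reference code.

```python
import numpy as np


def simhash_buckets(vectors: np.ndarray, gaussians: np.ndarray) -> np.ndarray:
    """Map each row of `vectors` to a bucket id in [0, 2**k).

    `gaussians` has shape (k, dim); bit i of the id is 1 when the vector has a
    positive inner product with the i-th random Gaussian vector.
    """
    bits = (vectors @ gaussians.T) > 0                    # (n, k) sign pattern
    powers = (1 << np.arange(gaussians.shape[0])).astype(np.int64)  # 1, 2, 4, ...
    return bits.astype(np.int64) @ powers                 # binary sign pattern -> int


rng = np.random.default_rng(seed=0)
dim, k = 8, 3
gaussians = rng.standard_normal((k, dim))  # k random vectors -> 2**k = 8 buckets
tokens = rng.standard_normal((5, dim))     # five toy token embeddings

print(simhash_buckets(tokens, gaussians))  # five bucket ids, each in 0..7
```

Only the signs matter, so rescaling a vector never moves it to another bucket. Vectors pointing in similar directions tend to share a bucket, which is what makes the partition useful for approximating nearest matches.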

Averaging Mechanism

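Within each bucket, query vectors are summed while document vectors are averaged. Summing keeps one contribution per query vector, as Chamfer similarity does. Averaging prevents a document from scoring higher simply because many of its vectors land in the same bucket.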
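Putting the pieces together, the sketch below builds a simplified FDE. Each set of vectors is bucketed with SimHash. Query vectors in a bucket are summed, document vectors are averaged, and the per-bucket blocks are concatenated into one fixed-length vector. This is a simplified illustration of the idea under stated assumptions (a single repetition, empty buckets left at zero, no projections). It is not the full construction from the MUVERA paper, and all function names are hypothetical.

```python
import numpy as np


def simhash_buckets(vectors: np.ndarray, gaussians: np.ndarray) -> np.ndarray:
    """Bucket id in [0, 2**k) from the sign pattern against k Gaussian vectors."""
    bits = (vectors @ gaussians.T) > 0
    powers = (1 << np.arange(gaussians.shape[0])).astype(np.int64)
    return bits.astype(np.int64) @ powers


def fde(vectors: np.ndarray, gaussians: np.ndarray, is_query: bool) -> np.ndarray:
    """Simplified Fixed Dimensional Encoding: one dim-sized block per bucket."""
    num_buckets = 2 ** gaussians.shape[0]
    blocks = np.zeros((num_buckets, vectors.shape[1]))
    buckets = simhash_buckets(vectors, gaussians)
    for b in range(num_buckets):
        members = vectors[buckets == b]
        if len(members) == 0:
            continue  # empty buckets stay zero in this sketch
        # Asymmetry: query vectors are summed, document vectors are averaged.
        blocks[b] = members.sum(axis=0) if is_query else members.mean(axis=0)
    return blocks.ravel()  # one fixed-length vector of num_buckets * dim values


def chamfer_similarity(query_vecs: np.ndarray, doc_vecs: np.ndarray) -> float:
    return float((query_vecs @ doc_vecs.T).max(axis=1).sum())


rng = np.random.default_rng(seed=1)
dim, k = 16, 3
gaussians = rng.standard_normal((k, dim))
query = rng.standard_normal((4, dim))
doc = rng.standard_normal((20, dim))

approx = fde(query, gaussians, is_query=True) @ fde(doc, gaussians, is_query=False)
exact = chamfer_similarity(query, doc)
print(f"FDE inner product: {approx:.3f}  Chamfer: {exact:.3f}")
```

With a single repetition the two numbers agree only roughly. The MUVERA paper tightens the approximation by repeating the construction with independent random vectors, concatenating the results, and filling empty document buckets. Those steps are omitted here. The payoff is that a document's FDE can be stored in an ordinary single-vector MIPS index.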

Performance Gains

Across the BEIR benchmark datasets, the MUVERA paper reports an average of 90% lower latency and 10% higher recall than PLAID, the previous state-of-the-art multi-vector retrieval system.

To visualize this, imagine a document about “Advanced Java Concurrency.” In the single-vector era, the document was one point in embedding space. With a multi-vector model, each token gets its own embedding, so sub-topics such as threading, memory leaks, and synchronization each leave their own footprint. MUVERA makes searching over those footprints fast enough to run at Google’s scale.

Why is “Semantic Richness” now more important than Keyword Frequency?

MUVERA rewards content depth and semantic richness rather than traditional keyword density. Because the algorithm can recognize intent across diverse data types (text, images, and code), thin content is increasingly devalued.

Comparison: Traditional SEO vs. MUVERA Era

| Feature | Traditional Indexing (Single-Vector) | MUVERA (Multi-Vector) |
|---|---|---|
| Primary Metric | Keyword Match/Frequency | Semantic Nuance & Intent |
| Retrieval Type | One embedding per document | Fixed Dimensional Encodings (FDE) |
| Speed/Efficiency | Baseline | 90% lower latency than PLAID (multi-vector baseline) |
| Content Goal | Targeted Keywords | Content Necessity & Unique Value |

How do I optimize for Gemini 3 and “Query Fan-Out”?

The integration of the Gemini 3 model family has introduced “Agentic” search. Gemini 3 utilizes a technique called “query fan-out,” where the system issues hundreds of parallel queries to synthesize a single comprehensive answer with diverse citations.
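Google has not published how Gemini’s fan-out is implemented. The toy sketch below only illustrates the general pattern described above: split a question into sub-queries, run them in parallel, and merge the cited sources into one list. `fan_out`, `toy_search`, and the index contents are hypothetical. The practical point is that each sub-query is an independent chance for a page to be retrieved and cited.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def fan_out(sub_queries: list[str], search: Callable[[str], list[str]],
            max_workers: int = 8) -> list[str]:
    """Run every sub-query in parallel and merge cited URLs in first-seen order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        results = list(pool.map(search, sub_queries))  # map() preserves input order
    merged, seen = [], set()
    for urls in results:
        for url in urls:
            if url not in seen:  # keep each source only once
                seen.add(url)
                merged.append(url)
    return merged


# Hypothetical toy index standing in for a real retrieval backend.
TOY_INDEX = {
    "java thread pools": ["example.com/concurrency", "example.com/executors"],
    "java memory leaks": ["example.com/concurrency", "example.com/heap-dumps"],
    "java synchronized keyword": ["example.com/locks"],
}


def toy_search(sub_query: str) -> list[str]:
    return TOY_INDEX.get(sub_query, [])


sub_queries = list(TOY_INDEX)  # in a real system, a model would generate these
print(fan_out(sub_queries, toy_search))
# ['example.com/concurrency', 'example.com/executors', 'example.com/heap-dumps', 'example.com/locks']
```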

Gemini 3: Agentic Search Strategies

Phrase headings and key passages as natural-language questions (e.g., “How do I [Topic]?”) so they can be matched to, and cited for, the sub-queries in Gemini’s multi-step reasoning.

Content must be structured so that individual paragraphs can stand alone as answers to specific sub-queries.

Use structured data (schema markup) to build a “content knowledge graph” that helps AI interpret relationships between your brand and topics.
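One way to act on the schema-markup advice is to emit schema.org JSON-LD that ties an article to its author, publisher, and topics. This is a minimal sketch. It assumes an `Article` with `author`, `publisher`, and `about` properties, which are standard schema.org vocabulary. Which properties Google actually reads is defined in its structured-data documentation, and the function name and sample values are hypothetical placeholders.

```python
import json


def article_jsonld(headline: str, author: str, publisher: str,
                   publisher_url: str, topics: list[str]) -> str:
    """Return a <script> tag holding schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author},
        "publisher": {"@type": "Organization", "name": publisher, "url": publisher_url},
        # `about` links the article to the entities (topics) it covers.
        "about": [{"@type": "Thing", "name": topic} for topic in topics],
    }
    body = json.dumps(data, indent=2, ensure_ascii=False)
    return f'<script type="application/ld+json">\n{body}\n</script>'


# All names and the URL below are placeholders.
print(article_jsonld(
    headline="Advanced Java Concurrency",
    author="Jane Doe",
    publisher="Example Dev Blog",
    publisher_url="https://example.com",
    topics=["Java", "Concurrency", "Thread synchronization"],
))
```

The generated tag goes in the page’s HTML. Keeping author and publisher names consistent across pages is what lets the markup add up to a coherent entity graph.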

Overcoming the “Trust Gap” and the “Mt. AI” Pattern

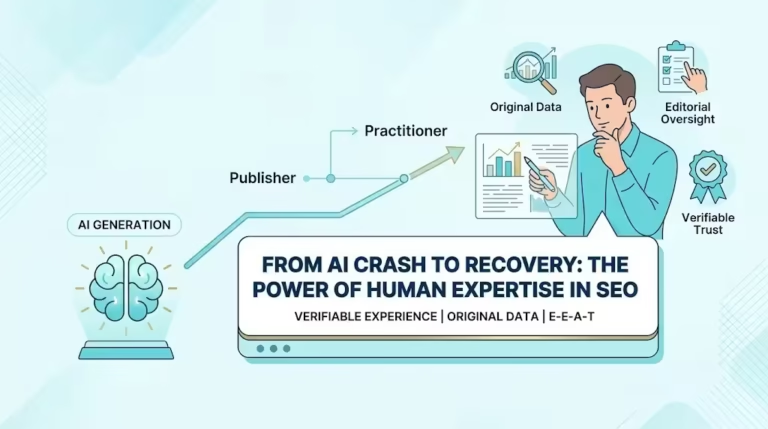

The 2026 search environment is defined by a high barrier to entry. The “Mt. AI” pattern describes sites that use low-effort AI content to see a rapid surge in rankings, followed by a vertical crash once Google’s quality classifiers identify the material as “AI slop”.

Understanding the “Mt. AI” Pattern

The search landscape no longer rewards volume. Quality classifiers in 2026 are designed to detect “hollow” authority, leading to swift, permanent de-indexing of sites that prioritize algorithmic speed over human substance.

The 15-Year Rule

Domains older than 15 years currently dominate the top 10, while domains under two years old hold less than 2% of top rankings. Longevity has become a proxy for inherent trust.

Experience (E) Multiplier

Content must prove real-world involvement (“I was there”) through original photos, case studies, and first-hand narratives that AI cannot synthesize.

Competitor Transparency

Avoid “Review Ransom” or biased listicles. Admitting competitors exist and using independent testing builds the “Trust” (T) pillar of E-E-A-T.

Conclusion: Becoming the “Source of Truth”

The era of SEO shortcuts has ended. In the MUVERA and Gemini 3 landscape, success is defined by “Brand Discovery” and “Entity Representation”. To remain visible, brands must move from being “publishers” of information to “practitioners” of expertise, providing the unique value-add that Google’s autonomous retrieval systems demand.